Introduction to Ethical Issues in AI

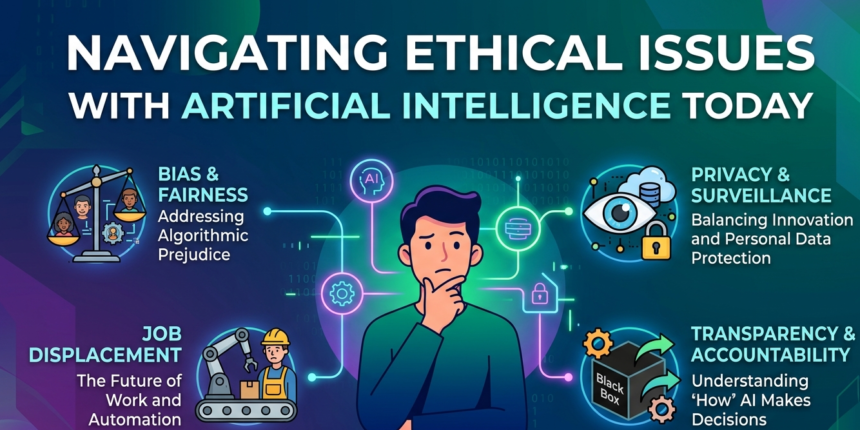

Artificial Intelligence (AI) has emerged as a transformative force across diverse sectors, revolutionizing how we approach problems and make decisions. As AI systems become increasingly sophisticated, integrating deep learning and neural networks, the ethical implications of these technologies have garnered significant attention. The rapid advancement and deployment of AI raise crucial ethical concerns that developers, policymakers, and society at large must confront.

At the core of these ethical issues lies the question of accountability. Who is responsible for the decisions made by AI systems? As machines are granted more autonomy, determining responsibility when an AI system fails or causes harm becomes complex. This conundrum becomes even more pronounced in high-stakes applications, such as autonomous vehicles or healthcare diagnostics, where errors can lead to catastrophic outcomes. Hence, understanding the extent of accountability is essential in the responsible implementation of AI.

Furthermore, biases present in data can lead to discriminatory outcomes in AI algorithms, posing ethical dilemmas related to fairness. AI systems trained on biased datasets can inadvertently perpetuate existing social inequalities, affecting marginalized communities significantly. Addressing this issue requires a thorough examination of the data sources and methodologies used to train these systems, ensuring that AI applications promote equity rather than exacerbate disparities.

The intersection of privacy and AI also raises ethical concerns, particularly regarding data collection and surveillance. With AI’s capacity to process vast amounts of personal information, implementing robust data protection measures while balancing innovation is a critical challenge. The necessity of transparency in AI operations further complicates the quest for ethical standards, as users must understand how AI systems function and make decisions impacting their lives.

As we delve deeper into the implications of artificial intelligence, acknowledging and addressing these ethical issues is paramount to fostering a balanced, equitable, and accountable AI landscape. The discussions surrounding these matters will shape the future of technology and its role in society.

Bias and Discrimination in AI Systems

Artificial Intelligence (AI) systems have increasingly been incorporated into various aspects of daily life, such as hiring processes, law enforcement, and lending decisions. However, these systems are not immune to the biases and discrimination that exist within society. Evidence has shown that AI can perpetuate and even exacerbate existing biases based on race, gender, and socioeconomic status. This raises critical ethical concerns regarding the fairness and equality of AI implementations.

For instance, facial recognition technology has in some cases demonstrated lower accuracy rates for individuals with darker skin tones than for those with lighter skin tones. Studies indicate that these systems misidentify people of color at substantially higher rates, which can lead to wrongful accusations and unjust treatment. Similarly, algorithms used in hiring processes can favor candidates based on biased historical data, which can disadvantage women or minority applicants and perpetuate a cycle of discrimination.

The root cause of such biases often lies in the training data used to develop these AI models. If the data reflects historical inequalities or biases, the AI system learns and reproduces these same patterns. For this reason, it is imperative to ensure that training datasets are comprehensive and representative of diverse populations. Furthermore, continuous monitoring and evaluation of AI systems must be undertaken to identify and rectify instances of bias.
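The monitoring step mentioned above can be made concrete with a simple per-group check. The following is a minimal sketch, not a complete fairness audit: it assumes binary decisions (1 for a favorable outcome, 0 otherwise) and a single group label per person. The function names and the toy data are hypothetical and chosen only for illustration.

```python
from collections import defaultdict


def selection_rates(groups, decisions):
    """Return the fraction of favorable decisions (1) for each group label."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, decision in zip(groups, decisions):
        totals[group] += 1
        positives[group] += decision
    return {g: positives[g] / totals[g] for g in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest to the highest selection rate (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        # No group received a favorable decision, so rates are equal.
        return 1.0
    return min(rates.values()) / highest


# Toy data, invented for illustration only.
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
decisions = [1, 1, 1, 0, 1, 0, 0, 0]

rates = selection_rates(groups, decisions)
ratio = disparate_impact_ratio(rates)
print(rates, ratio)
```

A ratio well below 1.0 signals that one group receives favorable decisions far less often than another. Which threshold should trigger a review is a policy choice, and a single metric like this one cannot establish that a system is fair.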

Addressing bias in AI involves not only the technical refinement of algorithms but also a commitment to ethical standards and practices. Engaging diverse teams in the development of AI systems is essential to recognize and challenge potential biases proactively. By prioritizing fairness and inclusivity in AI design, society can harness the potential of AI while minimizing harm and discrimination.

Privacy Concerns and Data Security

In recent years, the integration of artificial intelligence (AI) in various sectors has raised significant concerns regarding user privacy and data security. With AI systems often relying heavily on vast amounts of data to function optimally, the collection, management, and potential misuse of this data have led to a myriad of ethical dilemmas. One of the core issues surrounding AI is the extent and manner in which personal information is harvested and utilized. As organizations deploy AI technologies, they may collect sensitive data without adequate consent from users, leading to potential breaches of trust and privacy.

Furthermore, the phenomenon of surveillance has escalated with the advent of sophisticated analytics powered by AI. Governments and corporations are increasingly using AI-driven surveillance technologies to monitor individuals, which raises alarming implications for civil liberties and personal freedoms. The fine line between ensuring security and infringing on privacy becomes particularly blurred when AI systems operate without transparent frameworks guiding their function. This lack of oversight can lead to the unauthorized sharing and exploitation of personal data, consequently placing users at risk.

There are myriad ethical considerations regarding consent and data usage in the age of AI. Users often remain unaware of how their data is being stored, analyzed, or shared with third parties, which complicates the very notion of informed consent. Organizations are responsible not only for respecting but for actively protecting user privacy within their AI practices, which requires robust data protection protocols and transparent policies. These measures include encryption, data anonymization, and adherence to legal standards, all of which support a more ethical approach to data security in the context of AI.
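One of the measures named above can be sketched in a few lines. The example below shows pseudonymization, a weaker relative of full anonymization: direct identifiers are dropped or replaced with a keyed hash before the record reaches an analytics or training pipeline. The field names, the record, and the helper functions are hypothetical. Pseudonymized data can still be re-identified by anyone who holds the key or can link the remaining fields, so this is a starting point rather than a guarantee.

```python
import hashlib
import hmac
import secrets


def pseudonymize(value: str, key: bytes) -> str:
    """Replace an identifier with a keyed SHA-256 hash (HMAC), hex-encoded."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def strip_and_pseudonymize(record, key, id_field="email", drop_fields=("name",)):
    """Drop direct identifiers and swap the ID field for a stable token."""
    cleaned = {
        k: v
        for k, v in record.items()
        if k not in drop_fields and k != id_field
    }
    cleaned["user_token"] = pseudonymize(record[id_field], key)
    return cleaned


# The key must be stored separately from the data and kept secret.
key = secrets.token_bytes(32)

# Hypothetical record, invented for illustration only.
record = {"name": "Ada Example", "email": "ada@example.com", "age_band": "30-39"}
safe = strip_and_pseudonymize(record, key)
print(safe)
```

Using a keyed hash rather than a plain hash means that someone without the key cannot confirm a guess by hashing a known email address and comparing the result.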

Transparency and Accountability in AI

In the rapidly evolving field of artificial intelligence, the concepts of transparency and accountability have emerged as critical issues. As AI systems increasingly influence various sectors such as healthcare, finance, and criminal justice, understanding their decision-making processes becomes paramount. One of the foremost challenges is the ‘black box’ nature of many AI algorithms, where the mechanisms underlying their outputs remain obscure. This lack of clarity can erode trust among users and stakeholders, as it complicates the assessment of an AI system’s decisions.

To address the opacity associated with traditional AI models, the need for explainable AI (XAI) has gained traction. Explainable AI refers to methods and techniques that make the behavior and decisions of AI systems understandable to human users. By harnessing XAI, organizations can demystify their AI systems, allowing users to gain insights into how decisions are made. For instance, in a healthcare setting, an AI tool that predicts patient outcomes should provide practitioners with clear reasoning behind its recommendations. This transparency fosters trust and empowers users to make informed decisions based on AI insights.
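To illustrate what "clear reasoning behind its recommendations" might look like in the simplest case, the sketch below explains the output of a linear scoring model by listing how much each input contributed to the score. The weights, feature names, and patient values are invented for illustration and do not come from any real clinical model. More complex models generally need dedicated explanation techniques that this example does not cover.

```python
def explain_linear_score(weights, bias, features):
    """Return the score and per-feature contributions, largest effect first."""
    contributions = {name: weights[name] * features[name] for name in weights}
    score = bias + sum(contributions.values())
    ranked = sorted(
        contributions.items(), key=lambda item: abs(item[1]), reverse=True
    )
    return score, ranked


# Hypothetical model weights and patient features, for illustration only.
weights = {"age_decades": 0.5, "prior_admissions": 1.0, "on_medication": -0.5}
features = {"age_decades": 6, "prior_admissions": 2, "on_medication": 1}

score, ranked = explain_linear_score(weights, bias=-1.0, features=features)

print(f"score = {score}")
for name, contribution in ranked:
    print(f"{name}: {contribution:+.1f}")
```

Because each contribution is simply weight times value, a practitioner can see which inputs pushed the score up or down and by how much, and can challenge the recommendation if a contribution looks implausible.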

Moreover, accountability in AI refers to the responsibility of developers and organizations to ensure that their systems operate in a fair and ethical manner. Establishing accountability frameworks involves defining the roles and responsibilities of the stakeholders involved in the AI lifecycle, from data collection to model deployment. Such frameworks are essential to ensure that AI applications adhere to ethical standards and that users can seek redress when AI misjudgments lead to adverse outcomes. By championing transparency and accountability, the field of AI can strive towards more reliable and ethically sound systems.

Job Displacement and Economic Impact

The rapid advancement of artificial intelligence (AI) presents a significant challenge to the global job market and economic stability. As AI systems become more capable, their integration into various industries has the potential to automate tasks previously performed by human workers. This transition can lead to substantial job displacement, especially in sectors such as manufacturing, transportation, and customer service, where repetitive tasks are common.

From an ethical standpoint, companies and governments bear a responsibility to address the ramifications of these changes. It is essential to develop strategies to mitigate the negative impacts of job loss while also ensuring that the workforce is prepared for the evolving economic landscape. Failure to address this issue may not only exacerbate unemployment rates but also widen the income inequality gap.

To navigate the transition toward an AI-driven economy, organizations must invest in employee reskilling and upskilling programs. This can involve providing access to training in complementary skills that enhance human capabilities, enabling workers to take on roles that AI cannot easily replicate, such as those requiring creativity, emotional intelligence, and complex problem-solving. Governments should also play a key role by implementing forward-thinking policies that promote job creation in emerging industries and incentivize businesses to adopt ethical AI practices.

Moreover, discussions surrounding Universal Basic Income (UBI) have gained traction as a potential solution to counteract the economic challenges arising from AI-induced job displacement. By providing a safety net for those impacted, UBI could help stabilize economies while fostering innovation and entrepreneurship.

In conclusion, as AI continues to evolve, the ethical considerations surrounding job displacement and its economic impacts are paramount. Preparing the workforce for new opportunities and responsibly managing the transition are crucial steps for both corporate entities and governments to ensure a balanced future in the wake of AI integration.

Autonomous Weapons and Ethical Warfare

The advent of artificial intelligence (AI) has revolutionized numerous sectors, notably military applications, where it engenders a paradigm shift in warfare strategies. Autonomous weapons systems, capable of independently identifying and engaging targets, pose significant ethical challenges that compel urgent examination. One of the primary concerns surrounding these AI-driven systems is the potential dehumanization of warfare. As machines assume roles traditionally held by human soldiers, the essence of personal accountability in combat appears to diminish, raising fundamental questions about moral responsibility.

Moreover, the deployment of autonomous weapons introduces the risk of unfettered warfare, where decisions regarding life and death may be rendered by algorithms rather than human judgment. This automation can exacerbate existing ethical dilemmas, such as the justification of collateral damage, the necessity of proportionality in attacks, and overall compliance with international humanitarian law. As these technologies evolve, the question of who bears responsibility when an autonomous weapon commits an error that results in civilian casualties looms large.

The ethical implications extend further into the arena of decision-making processes encoded within AI systems. While proponents argue that AI can enhance precision and reduce human error, critics contend that a lack of transparency in AI decision-making mechanisms limits accountability. The potential for these systems to operate with insufficient oversight creates a worrying scenario where technological advancements might outpace ethical considerations, leading to unintended consequences in conflict zones.

In the context of ongoing discussions about the future of warfare, it is paramount for policymakers, ethicists, and technologists to collaborate in formulating robust frameworks that govern the use of autonomous weapons. Addressing these ethical concerns is essential to ensure that humanity retains meaningful control over machines capable of lethal action, and that fundamental moral principles in warfare are upheld.

Manipulation and Misinformation

Artificial Intelligence is revolutionizing how information is disseminated across various platforms, including media, advertising, and social networks. However, the ability of AI to curate and manipulate information raises significant ethical concerns, particularly in the context of democratic processes. The algorithms driving AI technologies have the potential to shape public perception and influence decision-making by selectively emphasizing certain narratives while downplaying others.

The rise of misinformation, facilitated by AI-driven tools, poses a substantial threat to informed public discourse. With the ability to generate fake news articles, create deepfakes, and propagate misleading content, AI can easily mislead audiences. These manipulative tactics can polarize communities and erode trust in legitimate information sources, ultimately undermining the foundational principles of democracy.

Moreover, the targeted advertising enabled by AI analytics exacerbates the issue of misinformation. By tailoring messages to specific demographics or user behaviors, advertisers can influence opinions and behaviors in subtle, yet powerful, ways. This form of manipulation can skew political perceptions, with dangerous implications for electoral integrity. In many cases, individuals are unaware that their views and beliefs are being shaped by algorithmically driven content, further complicating the ethical landscape.

To mitigate these ethical challenges, it is essential for developers, policymakers, and societal stakeholders to engage in open discussions about the implications of AI technologies. Promoting transparency in algorithmic processes and ensuring accountability for the dissemination of information will be critical steps toward upholding ethical standards in AI applications. Addressing these manipulation and misinformation concerns proactively is crucial to protecting democratic values and fostering an informed citizenry in the digital age.

AI and Human Rights

The rapid advancement of artificial intelligence (AI) technologies has raised significant human rights concerns that warrant urgent attention. As AI systems become increasingly integrated into various aspects of our lives, the potential for infringing upon fundamental human rights such as freedom of speech, privacy, and equality grows. Specifically, the deployment of AI-driven surveillance systems can lead to violations of an individual’s right to privacy. Governments and private entities utilizing AI for monitoring may collect excessive data without individuals’ consent and analyze it in ways that could undermine personal freedoms.

Moreover, AI algorithms are often influenced by biased data, which can perpetuate discrimination and inequality. This is particularly evident in areas like hiring practices, loan approvals, and law enforcement, where AI tools are implemented without comprehensive oversight. Such practices not only jeopardize the principles of equality but also reinforce systemic biases, thus impacting marginalized groups disproportionately. Ensuring that AI systems are developed and utilized in a manner that respects human rights is crucial for fostering an inclusive society.

The need for ethical guidelines and regulations around AI usage is paramount. These frameworks should prioritize the safeguarding of human dignity and the preservation of individual rights. By establishing clear ethical standards, we can promote accountability in AI development and mitigate risks associated with its deployment. International cooperation may also play a vital role in addressing these issues, as human rights standards should transcend borders. A collaborative approach can drive the formulation of universally accepted principles, thereby ensuring that AI innovation does not come at the expense of fundamental human rights.

Conclusion: The Path Forward in Ethical AI

As we navigate through the complex landscape of artificial intelligence (AI), it is crucial to address the myriad ethical issues that arise from its implementation and use. Concerns such as bias in algorithms, privacy violations, job displacement, and the potential for autonomous decision-making illustrate the pressing need for an ethical framework in AI development. Engaging various stakeholders, including policymakers, technologists, ethicists, and civil society, is essential for creating comprehensive strategies that promote responsible AI practices.

The first step towards ensuring ethical AI is the establishment of clear guidelines and standards that govern AI development and deployment. These standards should prioritize human welfare, accountability, and transparency while encouraging innovation. Policymakers must collaborate with technologists to draft regulations that are adaptable and take into account the dynamic nature of AI technology. This necessitates ongoing dialogue and revision of policies to ensure they remain relevant and effective in the face of rapid advancements in AI capabilities.

Furthermore, fostering an interdisciplinary approach can help surface diverse perspectives on the ethical issues surrounding AI. By involving ethicists and social scientists, the potential for bias and discrimination can be mitigated, helping to ensure that the technology serves the needs of all populations fairly. It is also imperative to educate and engage the public in discussions about AI, thus promoting an informed citizenry capable of contributing to decision-making processes.

Ultimately, the path forward in developing ethical AI lies in cooperation among stakeholders, robust policy frameworks, and a commitment to human-centered design. By embracing these principles, we can harness the transformative power of artificial intelligence while safeguarding societal values and human dignity. This proactive approach will be essential in making certain that AI innovations contribute positively to our world today and in the future.