Introduction to Reinforcement Learning

Reinforcement learning (RL) is a subset of machine learning, focused on how agents should take actions in an environment to maximize cumulative rewards. It contrasts with other machine learning paradigms, such as supervised and unsupervised learning, by emphasizing a learning process where an agent learns through interactions with its environment instead of relying on labeled input-output pairs.

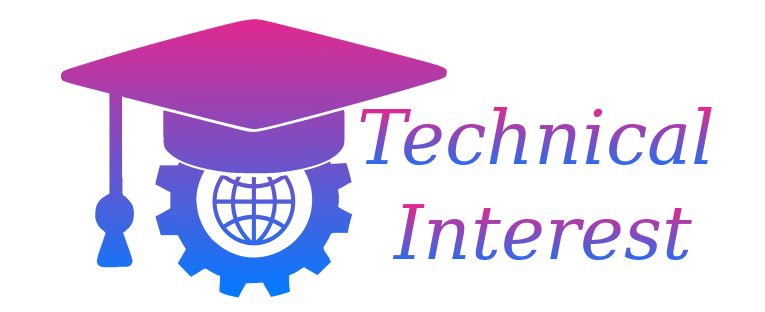

At the core of reinforcement learning are several key components: agents, environments, states, actions, and rewards. An agent is the learner or decision-maker, while the environment encompasses everything the agent interacts with. The state refers to the current situation of the agent within the environment, providing the context in which it operates. Actions are the choices available to the agent; each action leads the agent to a new state. Finally, rewards are feedback signals received after performing an action, guiding the agent toward desirable outcomes.

In reinforcement learning, the agent’s objective is to develop a policy, a mapping of states to actions, that maximizes the expected cumulative reward over time. This process involves exploration, where the agent tries new actions to discover their effects, and exploitation, where it selects actions that it already knows to yield high rewards. Striking a balance between exploration and exploitation is critical, as excessive exploration can lead to suboptimal performance, while too much exploitation may prevent discovery of better strategies.
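To make "cumulative reward over time" concrete, the short sketch below computes a discounted return for one episode. The discount factor `gamma` is an assumption introduced here for illustration (the text above does not specify one); it is a common way to weight near-term rewards more heavily than distant ones.

```python
def discounted_return(rewards, gamma=0.99):
    """Sum of gamma**t * rewards[t] over one episode.

    `gamma` is an illustrative assumption, not a value taken from the text.
    """
    total = 0.0
    # Work backwards so each step applies G_t = r_t + gamma * G_{t+1}.
    for reward in reversed(rewards):
        total = reward + gamma * total
    return total


# Three steps with a reward of 1 each, discounted by 0.5 per step.
print(discounted_return([1.0, 1.0, 1.0], gamma=0.5))  # 1 + 0.5 + 0.25 = 1.75
```

With `gamma=1.0` the function reduces to a plain sum of rewards, and smaller values make the agent more short-sighted.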

The appeal of reinforcement learning lies in its applicability to complex problems such as robotics, game playing, and autonomous driving, where decision-making in dynamic environments is crucial. As researchers uncover more about RL, its integration into various fields continues to evolve, highlighting its potential to transform how machines learn and interact with the world.

The Key Components of Reinforcement Learning

Reinforcement learning (RL) is defined by the interaction between an agent and its environment. This section covers the components that make up the RL framework: the agent, environment, states, actions, and rewards. Understanding how these elements connect is essential for understanding how learning happens in RL.

The agent is the learner or decision-maker that observes the environment and takes actions to achieve a goal. The agent’s main task is to maximize the cumulative reward over time by determining the best actions based on past experiences. This leads us to the environment, which encompasses everything that the agent interacts with. The environment defines the context within which the agent operates, providing feedback upon receiving the agent’s actions.

Within this framework, states represent the current conditions of the environment. Each state is a snapshot of the information the agent uses to choose its next move. Actions are the choices the agent makes in response to the current state. The set of all possible actions that an agent can take in a given state is known as the action space.

Finally, rewards serve as the feedback mechanism for the agent, indicating the value of a specific action taken from a particular state. A reward can be positive, reinforcing the action taken, or negative, suggesting a less favorable choice. Over time, the agent learns to navigate the environment more effectively by optimizing the sequence of actions based on the feedback received.

This interplay of agent, environment, states, actions, and rewards illustrates the dynamics of reinforcement learning, aiding in the understanding of how this machine learning paradigm operates.
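The interaction between these components can be sketched as a simple loop. The example below is a minimal illustration under stated assumptions: `CorridorEnv`, its reward values, and the `run_episode` helper are hypothetical names invented here, not part of any library. The environment is a short corridor in which the agent starts at cell 0 and is rewarded for reaching the last cell.

```python
import random


class CorridorEnv:
    """Hypothetical toy environment: cells 0..length-1, goal is the last cell."""

    def __init__(self, length=5):
        self.length = length
        self.state = 0

    def reset(self):
        # The state is simply the agent's current cell.
        self.state = 0
        return self.state

    def step(self, action):
        # Action 0 moves left, action 1 moves right; walls clamp the position.
        move = 1 if action == 1 else -1
        self.state = min(max(self.state + move, 0), self.length - 1)
        done = self.state == self.length - 1
        # Reward: small penalty per step, +1 on reaching the goal (assumed values).
        reward = 1.0 if done else -0.1
        return self.state, reward, done


def run_episode(env, policy, max_steps=100):
    """Run one episode: observe state, act, receive reward, repeat."""
    state = env.reset()
    total_reward = 0.0
    for _ in range(max_steps):
        action = policy(state)                  # agent chooses an action
        state, reward, done = env.step(action)  # environment responds
        total_reward += reward
        if done:
            break
    return total_reward


rng = random.Random(0)


def random_policy(state):
    return rng.choice([0, 1])


def always_right(state):
    return 1


print("always right:", run_episode(CorridorEnv(), always_right))
print("random:", run_episode(CorridorEnv(), random_policy))
```

A policy here is just a function from state to action, which matches the definition given earlier. The `always_right` policy reaches the goal in the fewest steps, so no other policy in this toy setting can collect a higher total reward.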

Understanding the Mechanics of Reinforcement Learning

Reinforcement learning is a paradigm within machine learning that focuses on how an agent can learn to make decisions by interacting with an environment. At its core, reinforcement learning involves a decision-making process where agents take actions to maximize cumulative rewards. This interaction through trial and error allows the agent to learn suitable behavior patterns over time.

One of the fundamental concepts in reinforcement learning is the exploration-exploitation trade-off. Exploration refers to the agent’s attempts to discover new strategies in the environment by trying out different actions. Conversely, exploitation involves utilizing currently known information to maximize rewards based on established strategies. Balancing these two aspects is crucial; too much exploration may lead to suboptimal short-term decisions, while excessive exploitation can hinder the discovery of potentially better long-term strategies.
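One common way to manage this trade-off is an epsilon-greedy rule, sketched below. This is only one of several possible strategies, and the names `epsilon_greedy`, `q_values`, and `epsilon` are chosen here for illustration. With probability `epsilon` the agent explores by picking a random action; otherwise it exploits the action with the highest estimated value.

```python
import random


def epsilon_greedy(q_values, epsilon, rng=random):
    """Pick an action index from a list of estimated action values.

    q_values: estimated value of each action in the current state.
    epsilon:  probability of exploring (0.0 = always exploit, 1.0 = always explore).
    """
    if rng.random() < epsilon:
        # Explore: choose any action uniformly at random.
        return rng.randrange(len(q_values))
    # Exploit: choose the action with the highest current estimate.
    return max(range(len(q_values)), key=lambda a: q_values[a])


estimates = [0.1, 0.9, 0.3]
print(epsilon_greedy(estimates, epsilon=0.0))  # always the greedy action, index 1
print(epsilon_greedy(estimates, epsilon=0.2))  # usually 1, occasionally random
```

In practice `epsilon` is often started high and decreased over training, so the agent explores early and exploits more as its estimates improve.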

Learning algorithms determine how efficiently and effectively a reinforcement learning system learns. Prominent among these are Q-learning and deep reinforcement learning (DRL). Q-learning is a model-free algorithm that learns the value of actions by estimating the expected cumulative reward of each state-action pair. In its basic form, it stores these estimates in a Q-value table and updates them as the agent gains experience in its environment. In contrast, deep reinforcement learning uses deep neural networks to approximate quantities such as the Q-values or the policy itself, which lets it handle complex environments and state spaces too large for a table. This approach has significantly advanced the field, enabling breakthroughs in applications such as gaming, robotics, and autonomous navigation.
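The table-based form of Q-learning can be illustrated with a single update step. The sketch below assumes the standard tabular update rule; the learning rate `alpha`, the discount factor `gamma`, and the function name `q_learning_update` are illustrative choices made here, not values or names taken from the text.

```python
from collections import defaultdict


def q_learning_update(q_table, state, action, reward, next_state, done,
                      n_actions, alpha=0.1, gamma=0.99):
    """Apply one tabular Q-learning update and return the new Q-value.

    q_table maps (state, action) pairs to estimated values.
    """
    # Value of the best action in the next state; zero if the episode ended.
    if done:
        best_next = 0.0
    else:
        best_next = max(q_table[(next_state, a)] for a in range(n_actions))
    # Move the current estimate a fraction alpha toward the target.
    td_target = reward + gamma * best_next
    q_table[(state, action)] += alpha * (td_target - q_table[(state, action)])
    return q_table[(state, action)]


q_table = defaultdict(float)  # unseen state-action pairs start at 0.0

# The agent reaches a terminal state and receives a reward of 1.
q_learning_update(q_table, state=3, action=1, reward=1.0,
                  next_state=4, done=True, n_actions=2, alpha=0.5)

# One step earlier: no immediate reward, but the next state now has value.
q_learning_update(q_table, state=2, action=1, reward=0.0,
                  next_state=3, done=False, n_actions=2, alpha=0.5, gamma=0.9)

print(dict(q_table))
```

The second update shows how reward information propagates backwards: state 2 gains value only because state 3 was already updated. A deep reinforcement learning method would replace `q_table` with a neural network that predicts these values from the state.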

In summary, the mechanics of reinforcement learning encompass a dynamic interplay of decision-making strategies, managing exploration and exploitation, and employing advanced algorithms like Q-learning and deep reinforcement learning. These components work collaboratively to enable agents to learn, adapt, and optimize their actions in various environments.

Real-World Applications of Reinforcement Learning

Reinforcement learning (RL) has found a multitude of applications across various fields, demonstrating its potential to tackle complex, dynamic problems. One prominent area is robotics, where RL facilitates the development of intelligent robots capable of learning from their interactions within environments. For instance, robots employed in manufacturing settings use reinforcement learning to optimize tasks such as assembly or quality control, improving efficiency and adaptability.

In the realm of gaming, reinforcement learning has made significant strides through the training of AI agents. Notable examples include Google DeepMind’s AlphaGo, which defeated a world champion Go player by employing sophisticated reinforcement learning techniques. This breakthrough not only illustrates the capability of RL in mastering intricate games but also sets a precedent for applying similar techniques in other fields.

The financial sector has also begun leveraging reinforcement learning for algorithmic trading. Here, RL algorithms analyze vast datasets to optimize trading strategies, balancing risk and reward. For instance, hedge funds are experimenting with dynamic portfolio management strategies powered by reinforcement learning, allowing them to adapt quickly to changing market conditions based on past performance and future projections.

Healthcare is another promising domain for reinforcement learning applications. Personalized treatment plans can be devised through RL by analyzing patient data and outcomes to determine the most effective therapeutic interventions over time. For example, RL systems have been used to optimize medication schedules for chronic diseases, ensuring better patient compliance and improved outcomes.

Finally, autonomous driving is an active area for reinforcement learning, which can help vehicles navigate and make decisions in real-time traffic scenarios. Companies such as Tesla and Waymo refine their driving systems using large volumes of simulated and real-world driving experience, a setting well suited to learning from interaction.

Challenges and Limitations of Reinforcement Learning

Reinforcement learning (RL) has emerged as a powerful approach in artificial intelligence, aiding in various applications from robotics to game playing. However, while there are promising advancements, significant challenges and limitations persist that hinder its practicality and scalability.

One of the most pressing challenges is sample efficiency. RL algorithms often require a vast number of interactions with the environment to learn effective policies. This high sample complexity makes it difficult to apply RL in real-world scenarios, where collecting data can be expensive, time-consuming, or even impractical. For instance, training a robotic system through trial and error can lead to significant wear and tear, posing risks in real-time applications.

Another critical limitation is the complexity of environment modeling. Many RL techniques operate under the assumption that the environment is relatively static and fully observable. However, in real-world situations, environments can be dynamic and partially observable. This disparity between theoretical models and practical applications can result in suboptimal learning and decision-making processes, as the algorithms struggle to adapt to changing conditions.

Additionally, the computational resources required for training RL models can be prohibitive. High-performance hardware, such as GPUs, is often necessary to handle the computations involved in processing multi-dimensional state spaces and executing deep learning algorithms. For small organizations or individual researchers, these resources may be out of reach, limiting the accessibility of advanced RL methods.

Furthermore, the exploration-exploitation dilemma presents another challenge: algorithms must balance the need to explore new actions with the desire to exploit known rewarding actions. Striking the right balance is difficult. Too little exploration can lock the agent into a suboptimal policy, while too much can prevent it from converging on a good one.

Comparison with Other Machine Learning Techniques

Reinforcement learning (RL) is one of the prominent paradigms within the machine learning landscape, distinguished by its unique approach to learning through interactions with the environment. Unlike supervised learning, which relies on a labeled dataset to train a model, or unsupervised learning, which seeks to find patterns or clusters in unlabeled data, reinforcement learning emphasizes the importance of trial and error. In RL, an agent learns to make decisions by receiving feedback in the form of rewards or penalties based on its actions.

Supervised learning involves training algorithms on a set of input-output pairs, effectively teaching the model to predict outcomes based on historical data. This technique is best suited for tasks where past information can directly guide future predictions, such as image classification or sentiment analysis. In contrast, unsupervised learning operates on datasets without labeled responses, focusing instead on uncovering hidden structures. Common applications include clustering and anomaly detection.

Reinforcement learning, on the other hand, is particularly effective in dynamic environments where decision-making is sequential. Use cases for RL include robotics, game-playing, and autonomous systems, where agents must navigate a complex environment and adapt to change. This makes RL suitable for problems that are inherently interactive and require ongoing learning, unlike the more static nature of supervised and unsupervised learning scenarios.

An essential distinction across these techniques lies in their feedback mechanisms. In supervised learning, feedback is explicit and direct: each prediction is compared against a known label. Unsupervised learning receives no external feedback at all and instead optimizes an objective defined on the data itself. In reinforcement learning, feedback arrives as rewards that are often delayed and sparse, necessitating exploration to uncover optimal strategies over time. Consequently, while each machine learning methodology has its strengths and weaknesses, reinforcement learning stands out due to its focus on adaptive learning from an interactive environment, broadening the scope of problems that can be effectively addressed.

Future Trends in Reinforcement Learning

As the field of artificial intelligence continues to evolve, reinforcement learning (RL) stands at the forefront of technological advancements. Future trends are likely to be shaped by several key factors, including algorithmic efficiency, interdisciplinary integration, and innovative applications. Enhanced algorithm efficiency will be pivotal for the progression of reinforcement learning systems, particularly in real-time applications. Researchers are continuously striving to develop algorithms that require fewer computational resources, enabling faster training times and extending RL applications to devices with limited processing power.

Moreover, the integration of reinforcement learning with other branches of artificial intelligence, such as deep learning and supervised learning, holds tremendous potential. This cross-pollination can lead to the development of hybrid models that harness the strengths of each discipline. For instance, combining deep learning with RL can facilitate improved decision-making in complex environments, enhancing the ability of agents to learn from high-dimensional data sets. This synergy may yield breakthroughs in various sectors, including healthcare, robotics, and finance.

Looking ahead, significant breakthroughs can be anticipated in the domain of multi-agent reinforcement learning, where multiple agents interact within a shared environment. Research in this area is expected to improve coordination and enable collaborative learning among agents, leading to more sophisticated outcomes. Furthermore, advancements in transfer learning within reinforcement contexts could allow trained policies to be applied to different tasks with minimal retraining, increasing the adaptability and efficiency of RL systems.

In conclusion, as we look toward the future of reinforcement learning, the emphasis on algorithm optimization and interdisciplinary collaboration is likely to pave the way for transformative developments across multiple domains. By addressing current limitations and integrating with other approaches, reinforcement learning is well positioned to keep shaping the landscape of artificial intelligence.

Resources for Learning More About Reinforcement Learning

For those interested in furthering their understanding of reinforcement learning (RL), a multitude of resources are available that cater to all levels of expertise. From foundational texts to advanced research papers, these materials provide valuable insights into the principles, algorithms, and practical applications of RL.

One widely recommended book is “Reinforcement Learning: An Introduction” by Richard S. Sutton and Andrew G. Barto. This is often seen as the definitive textbook on the subject, covering both the theoretical foundations and practical implementations of reinforcement learning techniques. An understanding of this text lays a solid groundwork for anyone serious about delving into RL.

Additionally, there are several online courses tailored to those who prefer structured learning. Platforms such as Coursera, edX, and Udacity offer courses on machine learning and specifically on reinforcement learning. For instance, Udacity’s “Deep Reinforcement Learning Nanodegree” provides both theoretical knowledge and hands-on projects. These courses usually involve video lectures, quizzes, and practical assignments, making them an engaging way to grasp complex concepts.

Research papers are another invaluable resource for learners who wish to stay updated with the latest advancements in the field. Websites like arXiv.org feature a wealth of preprints on cutting-edge research in RL. Following conferences such as NeurIPS, ICML, and ICLR can also expose students to pioneering ideas and innovative techniques being explored by researchers.

In addition to these materials, there are various online communities and forums such as Reddit and Stack Overflow, where learners can discuss challenges and share insights. Engaging with these communities can enhance understanding through peer learning. By delving into these resources, readers can effectively advance their knowledge of reinforcement learning and its applications in real-world scenarios.

Conclusion and Key Takeaways

Reinforcement learning (RL) has emerged as a transformative approach in the domain of artificial intelligence, enabling machines to learn from interactions with their environment. Throughout this blog post, we have delved into the core concepts foundational to reinforcement learning, such as agents, environments, actions, rewards, and the policies that guide agents in making decisions. The process of learning through trial and error, common in reinforcement learning, mimics the way living beings adapt to their surroundings, enhancing its appeal and practicality in various applications.

Additionally, we explored practical examples where reinforcement learning has demonstrated significant value, including its utilization in robotics, gaming, and automated trading systems. These real-world applications not only showcase the versatility of RL methodologies but also highlight their potential for solving complex problems that were previously out of reach for traditional programming approaches. The adaptability of reinforcement learning allows systems to improve over time, learning from success and failure alike.

Moreover, advances in computational power and algorithms have catalyzed the growth of reinforcement learning, propelling it to the forefront of research and development in AI. As industries continue to embrace this technology, a solid understanding of reinforcement learning concepts becomes increasingly essential for professionals aiming to harness its capabilities. Reinforcement learning is already shaping innovations across sectors, and the field rewards further exploration as its possibilities expand.