Introduction to Computing

Computing, at its core, refers to the process of performing mathematical calculations and processing data to solve problems and generate information. It plays an essential role in virtually every aspect of modern technology, underpinning everything from personal gadgets to complex organizational systems. Historically, the evolution of computing has been marked by significant milestones that have shaped how data is manipulated and understood.

Classical computing can be traced back to the 1830s and Charles Babbage’s design of the Analytical Engine, which is often regarded as the first design for a general-purpose mechanical computer, although it was never completed in his lifetime. Numerous advancements followed, notably the development of electronic computers during and just after World War II. The ENIAC (Electronic Numerical Integrator and Computer), completed in 1945 and publicly unveiled in 1946, marked a turning point by executing a wide range of calculations far faster than any human could. This breakthrough set the stage for the rapid development of computers that followed.

The early 1970s introduced the microprocessor, which revolutionized the computing landscape. By integrating the functions of a computer’s central processing unit onto a single chip, this innovation led to the proliferation of personal computers, making technology accessible to the general public. The spread of the internet further transformed computing, fostering connectivity and the exchange of information on an unprecedented scale. With these advancements, classical computers have become more powerful and efficient, solidifying their place in everyday life and business processes.

As we stand at the crossroads of classical and quantum computing, it is essential to understand these historical developments. This evolution not only highlights the capabilities of traditional computing but also lays the groundwork for discussing the innovative possibilities that quantum computing presents. The transition to quantum systems promises to redefine problem-solving and data processing at a fundamental level.

Understanding Classical Computers

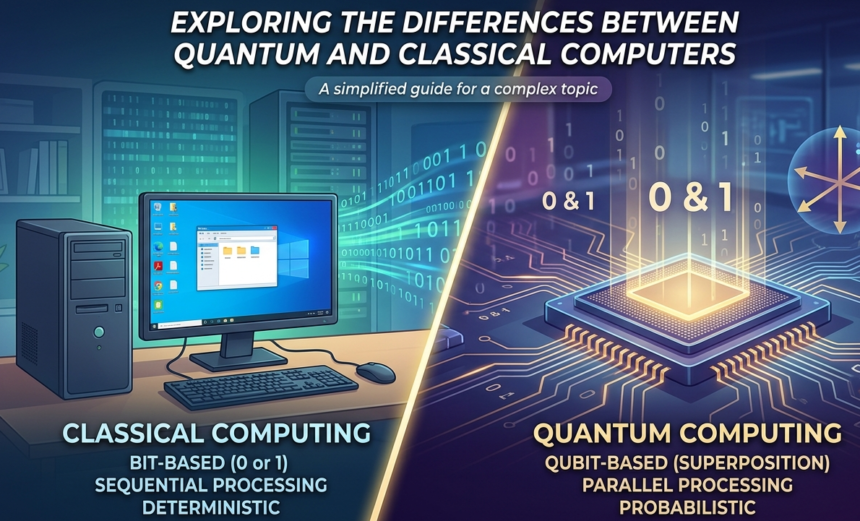

Classical computers are the conventional computing systems that form the backbone of modern technology. They operate based on classical physics principles and use bits as their fundamental unit of data. A bit can represent either a 0 or a 1, and through various combinations, classical computers perform complex calculations and run programs. The architecture of a classical computer typically consists of a central processing unit (CPU), memory, input and output devices, and storage. The CPU is responsible for processing data and executing instructions, while memory and storage are utilized to hold information temporarily and permanently, respectively.

Binary logic is the foundation of classical computing, where operations are conducted based on logical conditions. Utilizing Boolean algebra, classical computers can perform operations such as AND, OR, and NOT, which allow for decision-making processes within the computations. These operations can be chained together to execute more complicated tasks. For instance, binary arithmetic facilitates calculation processes, yielding outputs that drive various applications ranging from simple spreadsheet computations to intricate simulations and data analyses.
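
To make the chaining of Boolean operations concrete, here is a minimal Python sketch that builds binary addition out of nothing but AND, OR, and NOT. The function names (`full_adder`, `add_bits`, and so on) are illustrative choices for this example rather than part of any standard library, and real hardware implements these gates in circuitry rather than software.

```python
def AND(a: int, b: int) -> int:
    return a & b


def OR(a: int, b: int) -> int:
    return a | b


def NOT(a: int) -> int:
    return 1 - a


def XOR(a: int, b: int) -> int:
    # Exclusive OR expressed only with AND, OR and NOT.
    return AND(OR(a, b), NOT(AND(a, b)))


def full_adder(a: int, b: int, carry_in: int) -> tuple[int, int]:
    """Add three single bits; return (sum_bit, carry_out)."""
    partial = XOR(a, b)
    sum_bit = XOR(partial, carry_in)
    carry_out = OR(AND(a, b), AND(partial, carry_in))
    return sum_bit, carry_out


def add_bits(x_bits: list[int], y_bits: list[int]) -> list[int]:
    """Add two equal-length bit lists (least significant bit first)."""
    result, carry = [], 0
    for a, b in zip(x_bits, y_bits):
        sum_bit, carry = full_adder(a, b, carry)
        result.append(sum_bit)
    result.append(carry)  # final carry becomes the top bit
    return result


def to_bits(n: int, width: int) -> list[int]:
    return [(n >> i) & 1 for i in range(width)]


def from_bits(bits: list[int]) -> int:
    return sum(bit << i for i, bit in enumerate(bits))


print(from_bits(add_bits(to_bits(5, 4), to_bits(6, 4))))  # prints 11
```

Every arithmetic result here comes from chaining the same three logical operations, which is the sense in which binary logic underlies classical computation.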

Classical computing has contributed significantly to many fields, exemplified by applications in business, education, healthcare, and research. Systems are utilized for database management, software applications, and algorithmic trading strategies in finance. However, classical computers have limitations, especially in handling problems that require immense computational power or complex logical structures, such as large-scale optimization problems or simulations of quantum systems. As the complexity of tasks increases, conventional systems may struggle to deliver efficient solutions within a reasonable timeframe. Consequently, these limitations highlight the necessity for exploring alternative computing models, such as quantum computers, which promise enhanced processing capabilities for certain types of problems.

Introduction to Quantum Computing

Quantum computing is an advanced field of computer science that leverages the principles of quantum mechanics to process information in fundamentally different ways compared to classical computing. At its core, quantum computing utilizes quantum bits, or qubits, which are the essential building blocks of quantum information, analogous to bits in classical systems. However, whereas a classical bit is always either 0 or 1, a qubit can, thanks to the principle of superposition, exist in a weighted combination of both; measuring it yields 0 or 1 with probabilities determined by those weights.

Superposition means that a register of n qubits is described by 2^n amplitudes at once, and each quantum operation acts on all of them together. This is not the same as running many classical calculations in parallel, because a measurement returns only a single outcome. The advantage comes from algorithms that use interference to make correct answers more likely, and it applies to specific problems rather than to computation in general. Additionally, the phenomenon known as entanglement enhances the power of quantum computing further. When qubits become entangled, their measurement outcomes are correlated in ways that no classical system can reproduce, regardless of the distance separating them, although these correlations cannot be used to transmit information. This property enables coordinated processing that classical computers cannot replicate efficiently.
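
A small state-vector simulation can make superposition and entanglement more concrete. The sketch below is an illustration in plain Python, not how a real quantum device is programmed: the helper names (`apply_hadamard`, `apply_cnot`, `probabilities`) are assumptions made for this example, and the state is stored as a list of amplitudes indexed by the bit pattern of the qubits (qubit 0 is the lowest bit).

```python
import math


def apply_hadamard(state: list[float], target: int) -> list[float]:
    """Apply a Hadamard gate to one qubit, putting it into superposition."""
    h = 1 / math.sqrt(2)
    mask = 1 << target
    new_state = [0.0] * len(state)
    for index, amplitude in enumerate(state):
        zero_index = index & ~mask  # same basis state with target bit = 0
        one_index = index | mask    # same basis state with target bit = 1
        sign = -1.0 if index & mask else 1.0
        new_state[zero_index] += h * amplitude
        new_state[one_index] += sign * h * amplitude
    return new_state


def apply_cnot(state: list[float], control: int, target: int) -> list[float]:
    """Flip the target qubit wherever the control qubit is 1."""
    new_state = [0.0] * len(state)
    for index, amplitude in enumerate(state):
        if index & (1 << control):
            new_state[index ^ (1 << target)] += amplitude
        else:
            new_state[index] += amplitude
    return new_state


def probabilities(state: list[float]) -> list[float]:
    """Probability of each measurement outcome (squared amplitude)."""
    return [abs(amplitude) ** 2 for amplitude in state]


# Two qubits starting in |00>: amplitudes for 00, 01, 10, 11.
state = [1.0, 0.0, 0.0, 0.0]
state = apply_hadamard(state, target=0)        # superposition on qubit 0
state = apply_cnot(state, control=0, target=1)  # entangle the two qubits

print([round(p, 3) for p in probabilities(state)])  # [0.5, 0.0, 0.0, 0.5]
```

The final state is a Bell pair: a measurement gives 00 or 11 with equal probability and never 01 or 10, so the two outcomes are perfectly correlated. Note that the simulation needs 2^n amplitudes for n qubits, which is why classical simulation of large quantum systems becomes impractical.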

The significance of quantum computing lies in its potential to solve complex problems in fields such as cryptography, optimization, and drug discovery. For certain problems, such as factoring very large integers, a calculation estimated to take classical computers thousands of years could in principle be completed in hours or days by a sufficiently large, error-corrected quantum computer. This prospect has generated considerable interest within the scientific community, prompting extensive research and investment in quantum technologies. The move towards harnessing quantum computing signals a transformative shift that could redefine the limits of processing power, providing solutions to some previously intractable problems.

Key Differences Between Classical and Quantum Computing

Classical and quantum computing represent two fundamentally distinct approaches to data processing and information management. At the core of these differences lies the manner in which data is represented and manipulated. Classical computers use bits as the basic unit of data, which can exist in a state of either 0 or 1. In contrast, quantum computers employ qubits, which can exist in a weighted combination of both states due to a property known as superposition. A register of n qubits is therefore described by 2^n amplitudes, and operating on all of them together gives quantum computers a significant edge in certain complex computational scenarios, even though a measurement reveals only a single outcome.

Processing power is another area where stark contrasts emerge. Classical computers execute instructions step by step, and even with multiple cores or GPUs the amount of work they can do in parallel grows only in proportion to the hardware. This limits speed and efficiency for certain tasks, especially those whose search space grows exponentially with the size of the input. Quantum computing, by utilizing superposition, entanglement, and interference, can reduce the number of steps required for specific operations: dramatically for the integer factoring that underpins much of modern cryptography, and more modestly for tasks such as unstructured search and some optimization problems.

Algorithms in classical and quantum systems differ significantly as well. Classical algorithms manipulate definite bit values through established logical sequences to yield predictable outcomes. Quantum algorithms, however, exploit quantum phenomena and are generally probabilistic: they are designed so that the correct answer is measured with high probability, and they can solve specific problems much faster than their classical counterparts. For instance, Shor’s algorithm can factor large integers in polynomial time, whereas the best-known classical algorithms require super-polynomial time, illustrating the kind of advantage quantum computers could offer.
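
The structure of Shor’s algorithm can be sketched classically. Factoring reduces to finding the order r of a number a modulo N (the smallest r with a^r ≡ 1 mod N); the quantum computer is needed only for that order-finding step. In the illustrative Python below, `find_order` is a brute-force stand-in for the quantum part, so it is only usable for tiny numbers, and both function names are assumptions made for this example.

```python
import math


def find_order(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1, assuming gcd(a, n) == 1.

    Brute force: this is the step Shor's algorithm performs efficiently
    on a quantum computer, and it is exponentially slow done this way.
    """
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def factor_from_order(n: int, a: int):
    """Try to split n using base a; return (p, q) or None if a is unlucky."""
    shared = math.gcd(a, n)
    if shared != 1:
        return shared, n // shared  # the guess already shares a factor
    r = find_order(a, n)
    if r % 2 == 1:
        return None  # odd order: try another base
    half_power = pow(a, r // 2, n)
    if half_power == n - 1:
        return None  # trivial square root of 1: try another base
    p = math.gcd(half_power - 1, n)
    return p, n // p


print(find_order(7, 15))         # 4
print(factor_from_order(15, 7))  # (3, 5)
```

When a base fails (the function returns `None`), the usual approach is simply to pick another base at random; for most composite N a good base is found after a few tries.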

Error correction is critical in both computing paradigms, yet it poses unique challenges in quantum systems. Classical computers can employ well-established error-correcting codes, which add redundant copies or check bits, to maintain data integrity. In contrast, quantum error correction must work around the fact that an unknown qubit state cannot be copied and is disturbed by direct measurement, requiring innovative methods that differ significantly from classical techniques. These differences, fundamentally rooted in the underlying principles of each computing type, profoundly impact their performance and capabilities.
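
As a point of reference for the classical side, the simplest error-correcting code is a three-fold repetition code with majority voting. The Python below is a minimal sketch with illustrative function names; practical systems use far more efficient codes, but the principle of adding redundancy and then checking it is the same.

```python
def encode(bits: list[int]) -> list[int]:
    """Repeat every bit three times."""
    return [bit for bit in bits for _ in range(3)]


def decode(coded: list[int]) -> list[int]:
    """Recover each bit by majority vote over its group of three."""
    decoded = []
    for start in range(0, len(coded), 3):
        group = coded[start:start + 3]
        decoded.append(1 if sum(group) >= 2 else 0)
    return decoded


message = [1, 0, 1, 1]
received = encode(message)
received[4] ^= 1  # simulate a single bit flipped in transit

print(decode(received) == message)  # True
```

This works because classical bits can be freely copied and inspected. Neither is possible for an unknown qubit state, which is why quantum codes have to detect errors indirectly.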

Strengths of Quantum Computers

Quantum computers present several potential advantages over their classical counterparts, particularly in their ability to tackle certain types of problems that are computationally intensive for classical computers. Notably, optimization problems, which involve identifying the best solution among a vast number of possible combinations, are a prominent target for quantum approaches.

For instance, in the realm of supply chain management, optimizing logistics is a significant challenge due to the multifaceted interactions between various components. Companies like Volkswagen have started exploring quantum computing algorithms to optimize traffic flow in urban areas, with the aim of improving efficiency and reducing travel time. Their approach uses quantum annealing technology to search for good routes, illustrating how quantum methods might tackle real-world problems, although a consistent speed advantage over classical solvers has yet to be demonstrated.
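
To give a feel for what such an optimization problem looks like, the sketch below encodes a toy congestion problem as a QUBO (quadratic unconstrained binary optimization), the formulation commonly used as input to quantum annealers. Everything specific here is an assumption made for illustration: the scenario, the weights, and the function names are invented, and the problem is solved by classical brute force rather than on quantum hardware.

```python
from itertools import product


def qubo_energy(assignment: tuple[int, ...], weights: dict) -> int:
    """Cost of one assignment: sum of w * x_i * x_j over all weighted pairs."""
    return sum(w * assignment[i] * assignment[j]
               for (i, j), w in weights.items())


def brute_force_minimum(weights: dict, n_variables: int):
    """Check all 2**n assignments and return the cheapest one with its cost."""
    best = min(product((0, 1), repeat=n_variables),
               key=lambda x: qubo_energy(x, weights))
    return best, qubo_energy(best, weights)


# Hypothetical scenario: x_i = 1 means car i takes a short but narrow road.
# Each car gains 1 by taking it (diagonal terms), but every pair of cars
# sharing the road costs 2 in congestion (off-diagonal terms).
weights = {
    (0, 0): -1, (1, 1): -1, (2, 2): -1,
    (0, 1): 2, (0, 2): 2, (1, 2): 2,
}

best, cost = brute_force_minimum(weights, n_variables=3)
print(sum(best), cost)  # 1 -1  (exactly one car should use the short road)
```

The brute-force search checks 2^n assignments, so it is hopeless beyond a few dozen variables. Annealers and classical heuristics both aim to find low-cost assignments without enumerating every possibility.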

Another area where quantum computers could have a major impact is cryptography. Quantum algorithms, such as Shor’s algorithm, can factor large numbers far faster than any known classical algorithm, which would undermine widely used public-key encryption schemes such as RSA. This has prompted a reevaluation of data security as the quantum age approaches. Tech giants like Google and IBM are actively researching quantum-safe technologies to prepare for a future where quantum computers could break existing cryptographic protocols.
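
The link between factoring and encryption can be shown with a toy RSA key. In the Python sketch below, the primes are deliberately tiny so that classical trial division can stand in for what a large quantum computer running Shor’s algorithm would do to a real key; the function names are illustrative, and `pow(e, -1, phi)` for the modular inverse assumes Python 3.8 or later.

```python
import math


def factor_by_trial_division(n: int) -> tuple[int, int]:
    """Find a factor pair of n; feasible only because n is tiny here."""
    for candidate in range(2, math.isqrt(n) + 1):
        if n % candidate == 0:
            return candidate, n // candidate
    raise ValueError("n is prime")


def recover_private_exponent(n: int, e: int) -> int:
    """Derive the private exponent d from the public key (n, e) by factoring n."""
    p, q = factor_by_trial_division(n)
    phi = (p - 1) * (q - 1)
    return pow(e, -1, phi)  # modular inverse of e


# Toy public key: n = 61 * 53, e = 17. Real keys use primes of 1024+ bits.
n, e = 3233, 17
ciphertext = pow(65, e, n)  # someone encrypts the message 65

# An attacker who can factor n rebuilds the private key and decrypts.
d = recover_private_exponent(n, e)
print(d)                      # 2753
print(pow(ciphertext, d, n))  # 65
```

The security of the scheme rests entirely on the factoring step being infeasible. Quantum-safe (post-quantum) schemes are built on different mathematical problems for which no efficient quantum algorithm is known.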

Furthermore, quantum computers are uniquely suited for simulating quantum systems, which is particularly relevant in the field of chemistry. The complexity of quantum interactions between atoms and molecules often exceeds the capabilities of classical computers, because the resources needed to represent them exactly grow exponentially with the size of the system. Research initiatives have already used quantum computers to model small molecules and simple material properties, with the longer-term goal of aiding drug discovery and new material development. This capability to model real-world systems accurately presents a profound opportunity for advancements in various scientific frontiers.

Taken together, the strengths of quantum computers, ranging from solving optimization problems to reshaping cryptography and enabling intricate simulations, highlight their transformative potential across sectors. As technological advancements continue, their deployment promises to redefine problem-solving paradigms and significantly enhance computational capacity for these classes of problems.

Limitations of Quantum Computers

Quantum computing presents a paradigm shift in computational capabilities, yet it is not without its limitations. One of the primary challenges lies in hardware development. Quantum computers rely on qubits, which are far more fragile than classical bits. Developing stable qubits that maintain their quantum states long enough to perform calculations is an ongoing challenge. Fluctuations in temperature and electromagnetic fields can cause qubits to lose coherence, leading to errors in computations. Consequently, while a number of experimental quantum computers have been built, they often struggle with scalability and stability.

Error rates represent another significant limitation in the realm of quantum computing. Current quantum systems are prone to various types of errors, including bit-flip and phase-flip errors. These inaccuracies can accumulate during computation, making reliable calculations difficult. Error correction methods for qubit states exist, but they significantly increase the resource requirements of quantum computers, further complicating their practical implementation.
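
The three-qubit bit-flip code is the textbook illustration of how a quantum error can be found without reading the protected data. The Python sketch below simulates it with a plain list of eight amplitudes; the function names are assumptions made for this example, the parity checks are computed directly from the simulated state (real hardware would measure them using extra ancilla qubits), and the code handles only a single bit-flip, not phase-flip errors.

```python
def encode_logical_qubit(alpha: float, beta: float) -> list[float]:
    """Spread alpha|0> + beta|1> across three qubits: alpha|000> + beta|111>."""
    state = [0.0] * 8
    state[0b000] = alpha
    state[0b111] = beta
    return state


def apply_bit_flip(state: list[float], qubit: int) -> list[float]:
    """Pauli-X on one qubit: move each amplitude to the flipped basis state."""
    new_state = [0.0] * len(state)
    for index, amplitude in enumerate(state):
        new_state[index ^ (1 << qubit)] = amplitude
    return new_state


def syndrome(state: list[float]) -> tuple[int, int]:
    """Parities of qubit pairs (0, 1) and (1, 2).

    After at most one bit flip, every basis state with non-zero amplitude
    has the same parities, so the syndrome says nothing about alpha or beta.
    """
    for index, amplitude in enumerate(state):
        if abs(amplitude) > 1e-12:
            b0, b1, b2 = index & 1, (index >> 1) & 1, (index >> 2) & 1
            return b0 ^ b1, b1 ^ b2
    raise ValueError("state has no non-zero amplitude")


# Which qubit to flip back for each syndrome (None means no error detected).
CORRECTION = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}


def correct(state: list[float]) -> list[float]:
    qubit = CORRECTION[syndrome(state)]
    return state if qubit is None else apply_bit_flip(state, qubit)


original = encode_logical_qubit(0.6, 0.8)
damaged = apply_bit_flip(original, qubit=1)  # an error hits the middle qubit

print(syndrome(damaged))             # (1, 1)
print(correct(damaged) == original)  # True
```

Even this minimal code spends three physical qubits to protect one logical qubit against one kind of error. Codes that also handle phase flips and operate fault-tolerantly need many more, which is the resource overhead described above.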

Additionally, many quantum computers, notably those built on superconducting qubits, require extreme operating conditions, such as temperatures close to absolute zero, to function effectively. Such requirements not only complicate the design of quantum computing systems but also raise questions regarding their practicality in everyday applications. This need for specialized environments makes it hard to envision quantum computers as replacements for classical systems in standard computing tasks.

Furthermore, as of now, quantum computing is suited only to a narrow set of tasks, like factoring large numbers or particular optimization and simulation problems, rather than general-purpose computing. Major tech companies and research institutions are investing heavily in quantum technology, but it remains largely in the experimental phase. Until significant breakthroughs are achieved in overcoming these challenges, quantum computers will continue to complement, rather than replace, classical computing systems.

Current Developments in Quantum Computing

Quantum computing is a rapidly evolving field, characterized by significant advancements and ongoing research that is reshaping the way we approach complex computational problems. Recent developments indicate progress toward practical quantum systems that leverage quantum entanglement and superposition, promising improvements over classical computing capabilities for certain problems.

A number of key players are actively contributing to the progression of quantum technology. Notably, tech giants such as IBM, Google, and Microsoft are at the forefront of developing quantum processors and platforms. IBM’s Quantum Experience and Google’s Sycamore processor are exemplary embodiments of these advancements, with both companies investing heavily in creating accessible quantum computing frameworks for diverse applications. Additionally, startups like Rigetti Computing and IonQ are introducing innovative solutions, focusing on hybrid quantum-classical approaches, which facilitate integration into existing computing ecosystems.

Academic institutions are also pivotal in this burgeoning field, with research centers like MIT’s Research Laboratory of Electronics and UC Berkeley’s Quantum Computing Research Group dedicating extensive resources toward the exploration of quantum algorithms and hardware. Collaborative efforts between academia and industry are yielding substantial breakthroughs. For instance, partnerships such as the Quantum Computing Institute established by several universities in collaboration with major corporations are pushing the boundaries of our understanding of quantum behaviors and applications.

Significant milestones, such as early demonstrations of error-corrected qubits, indicate progress in stabilizing quantum states, an essential factor for practical computing applications. Research trends are increasingly focusing on scalability and coherence times, which are crucial for building reliable quantum processors. With numerous breakthroughs and cooperative endeavors, the landscape of quantum computing is evolving rapidly, pointing to a future where quantum systems might supplement classical computing or even overcome some of its limitations.

Future Implications of Quantum Computing

Quantum computing heralds a potentially transformative era for various industries, including finance, healthcare, and artificial intelligence. By leveraging the principles of quantum mechanics, this advanced technology has the potential to vastly outperform classical computers on certain classes of problems.

In the financial sector, quantum computing could change how trading strategies, risk assessment, and fraud detection are carried out. Traditional models often rely on probabilistic methods that require very large numbers of simulated scenarios, and quantum algorithms could in principle reach comparable accuracy with fewer steps. If realized at scale, this could lead to more accurate predictions of market fluctuations and improved decision-making processes for investors.

Healthcare is another field poised for radical transformation through quantum technology. The ability to analyze vast amounts of genetic data quickly could accelerate drug discovery and the development of personalized treatment plans. Quantum computing may facilitate breakthroughs in understanding complex biological systems and diseases, ultimately leading to improved patient outcomes.

In the realm of artificial intelligence, quantum computing holds the promise of enhancing machine learning algorithms. By processing large datasets and enabling the optimization of complex algorithms, quantum systems could significantly advance capabilities in natural language processing, image recognition, and predictive analytics. As a result, AI could become more efficient and accurate in performing tasks across diverse applications.

Furthermore, the implications of quantum computing extend to data security protocols. Many current public-key encryption methods rely on mathematical problems that are impractical for classical computers to solve; a sufficiently large, fault-tolerant quantum computer could break them. This prospect necessitates a shift toward quantum-resistant security measures, safeguarding sensitive data against potential vulnerabilities.

All considered, the advent of quantum computing represents a paradigm shift that could redefine how industries operate, enhance data security measures, and expand our understanding of complex systems.

Conclusion: The Path Forward

In this discussion on computational paradigms, we have delineated the fundamental distinctions between classical and quantum computers. Classical computers, which operate on bits as the basic unit of information—representing either 0 or 1—have served as the backbone of technological advancement for decades. Their reliable, well-established systems perform a wide range of tasks efficiently, making them indispensable in various sectors, from finance to data management.

In contrast, quantum computers, leveraging the principles of quantum mechanics, utilize qubits. These qubits can exist in superpositions of 0 and 1, permitting quantum computations to solve specific complex problems far faster than classical computers, which could take an impractical amount of time to address them. As we have noted, quantum computing holds significant potential for advancements in fields such as cryptography, optimization, and pharmaceuticals, with ongoing research expected to further bridge the gap between theory and practical application.

However, it is crucial to acknowledge that classical and quantum systems are not in opposition; rather, they coexist and complement each other. As quantum technology matures, the integration of classical computing resources will remain a vital aspect of computational infrastructure. Understanding how both systems can function in tandem will play a pivotal role in developing more sophisticated algorithms and applications in the future.

As technology progresses, it is imperative for readers to remain abreast of developments in both computing paradigms. The journey of computing is far from over, and staying informed will empower individuals and organizations to harness the potential of both classical and quantum computing effectively.